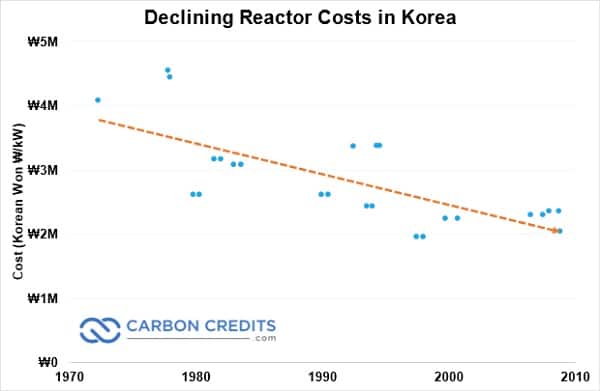

- Over the course of building twenty-eight reactors from 1971–2008, the average cost decreased by 50 percent.

In fact, that decline is similar to Germany’s experience with solar panel pricing over the same period.

France saw a similar trend, with nuclear construction costs dropping from 1960 to 1970.

After that, costs remained relatively level as they built out a nuclear fleet that powers more than 70 percent of the country.

So the United States—the nuclear power pioneer—should be building nuclear plants cheaper than anyone else, right? Also wrong.

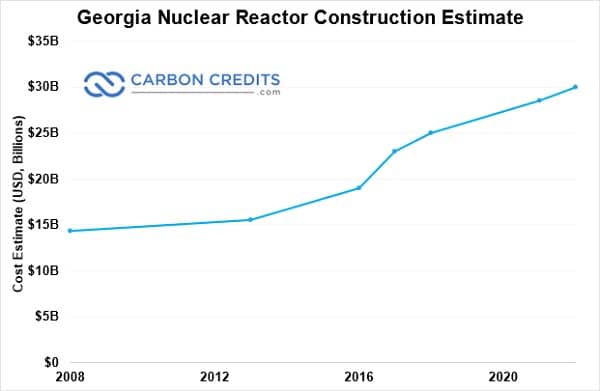

In fact, a few years ago, two reactors in South Carolina were cancelled after their estimated price tag skyrocketed from $9.8 billion to $25 billion.

This year, two reactors under construction in Georgia saw their expected costs pushed once again—this time, to $30.3 billion.

Utilities covet the massive amounts of carbon-free electricity that nuclear power generates, just not the massive price tags attached. They believe that nuclear energy and net zero are highly compatible. And they seem to be right.

But here’s the thing: even the starting price tags on those U.S. nuclear reactors have risen by hundreds of percent over the past few decades.

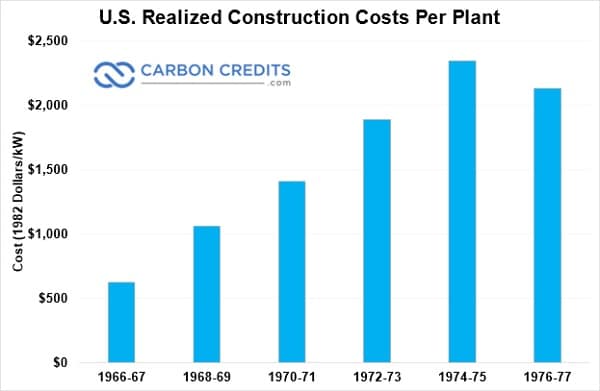

Now, nuclear is not expensive on its own. And most of the plants that are in operation now were relatively cheap when they were built. In fact, in the early days of nuclear, prices were on a steady downward trend similar to that of wind and solar.

As each successive nuclear reactor was built, the price naturally dropped.

That’s due to a natural phenomenon known as the “learning rate.”

First-Mover Disadvantage

A learning rate is the rate at which a technology decreases in cost as it increases in use.

For example, a learning rate of 10% means that every time installed capacity doubles, the price drops by 10%.
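
The definition above can be turned into a small calculation. This is a minimal sketch, assuming the standard learning-curve form in which cost is multiplied by (1 - learning rate) for each doubling of installed capacity. The function name `cost_after_growth` and the starting cost of 100 (arbitrary units) are illustrative assumptions, not figures from this article.

```python
import math


def cost_after_growth(initial_cost, learning_rate, capacity_ratio):
    """Estimate unit cost after installed capacity grows by `capacity_ratio`.

    learning_rate is a fraction (0.10 means 10%).
    capacity_ratio is new capacity divided by starting capacity
    (2 means one doubling, 8 means three doublings).
    """
    doublings = math.log2(capacity_ratio)
    return initial_cost * (1.0 - learning_rate) ** doublings


# Hypothetical starting cost of 100 units with a 10% learning rate.
for ratio in (1, 2, 4, 8):
    cost = cost_after_growth(100.0, 0.10, ratio)
    print(f"capacity x{ratio}: cost {cost:.1f}")
```

With a 10% learning rate, three doublings (eight times the capacity) bring the hypothetical cost from 100 down to roughly 73, because the 10% reductions compound.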

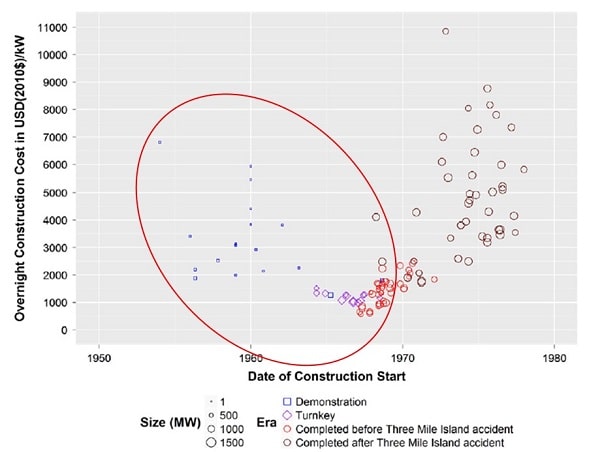

Until 1970, the learning rate for nuclear in the United States was a fantastic 23 percent. In other countries, it was as high as 35 percent.

- If those learning rates had continued through 2015, nuclear reactors would be less than one-tenth of their current cost.

And if the accelerated deployment of nuclear through the mid-‘70s had continued…

- Nuclear would have replaced all of the U.S.’s coal- and gas-powered electricity by 2015 and helped the country’s race to net zero.

It also would have avoided more than 150 Gt of CO2 emissions, as well as about ten million deaths from that pollution.

Only it didn’t. Because sometime around 1970, the learning rate broke. And the price started to rise for every additional reactor built.

Suddenly, learning rates around the world turned negative. In the United States, the rate dropped to -94 percent.
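
To see what a negative learning rate means in practice, here is a rough sketch using the same per-doubling definition given earlier: each doubling of capacity multiplies cost by (1 - learning rate), so a negative rate makes the multiplier larger than 1. The helper `cost_multiplier` is a hypothetical name used only for illustration, and the two rates are the U.S. figures quoted in this article.

```python
def cost_multiplier(learning_rate, doublings):
    """Factor by which cost changes after a number of capacity doublings.

    learning_rate is a fraction: 0.23 for 23%, -0.94 for -94%.
    """
    return (1.0 - learning_rate) ** doublings


# Pre-1970 U.S. rate from the text: costs fall with each doubling.
print(round(cost_multiplier(0.23, 1), 2))

# Post-1970 U.S. rate from the text: costs nearly double with each doubling.
print(round(cost_multiplier(-0.94, 1), 2))
print(round(cost_multiplier(-0.94, 2), 2))
```

Under this reading, a rate of -94 percent means each doubling of capacity nearly doubles the cost per reactor, and two doublings almost quadruple it.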

Part of the problem was that too many nuclear plants were being built at once.

Demand for nuclear plants skyrocketed in the late ‘60s, causing supply chain issues for both skilled craftsmen and huge, complex nuclear reactor components.

Prices on even simple parts and labor skyrocketed.

More importantly, if a part or worker was unavailable when needed, construction was delayed. And since utilities were financing construction with debt, every additional day meant additional millions of dollars in interest.

But that’s only part of the picture.

A New Expensive Point of View

In the early days of nuclear, every project was “First of a Kind”—or FOAK.

No manufacturer or developer had experience building new nuclear reactors.

- In fact, the first nuclear reactors in Israel and Pakistan were built by a manufacturer of bowling equipment.

So with dozens of plants being brought online all at once, it was a time of learning the wrong way to do things. Then implementing insanely expensive fixes to plants still under construction.

For example, the theory of plate tectonics wasn’t even worked out until around 1967. Suddenly, dozens of plants under construction had to be retroactively earthquake-proofed.

Reactors being built in the 1970s in the U.S. were in an environment of constant change that made controlling or even knowing costs impossible.

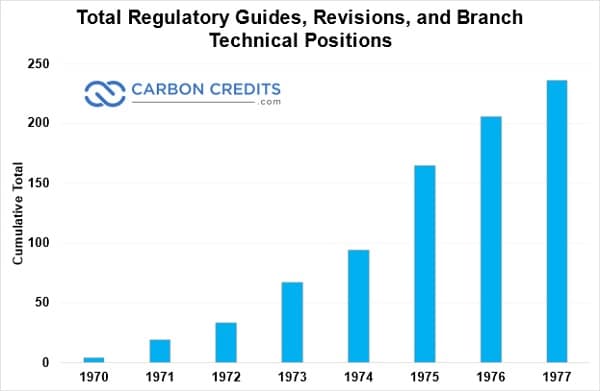

Each new snag or accident at an operating reactor introduced a new regulation or standard.

- Between 1970 and 1978, the number of engineering standards nuclear plants had to abide by soared from 400 to more than 1,800.

- During the same time period, regulatory guides and positions from the Nuclear Regulatory Commission increased from 4 to… 304.

Every time a new guide or standard was released, every reactor under construction had to be brought up to code.

And every minor change risked a “ripple effect” that could force an overhaul of entire related systems.

For example, the Davis-Besse reactor was budgeted at $136 million when construction started in 1967; when it was finished a decade later, the price tag was $650 million.

A study found that modifications (and their chain effects) forced by the Nuclear Regulatory Commission were responsible for more than $400 million of that cost.
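
The arithmetic behind those figures can be laid out as a quick sketch. It uses only the three dollar amounts quoted above and treats the "more than $400 million" as a lower bound. The variable names are illustrative, and the comparison assumes the figures are in nominal dollars with no inflation adjustment, which the text does not specify.

```python
# Figures quoted in the text, in millions of dollars.
budget_musd = 136.0      # budget when construction started
final_musd = 650.0       # price tag at completion
regulatory_musd = 400.0  # lower bound attributed to mandated modifications

# Total overrun and how many times over budget the plant finished.
overrun_musd = final_musd - budget_musd
growth_factor = final_musd / budget_musd

# Share of the overrun explained by the modifications (at least this much).
regulatory_share = regulatory_musd / overrun_musd

print(f"overrun: ${overrun_musd:.0f} million")
print(f"final cost vs. budget: {growth_factor:.1f}x")
print(f"modifications' share of overrun: at least {regulatory_share:.0%}")
```

On these numbers the plant finished at nearly five times its budget, and the mandated modifications account for more than three-quarters of the increase.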

While the learning curve may have broken, this was the system working.

As operating experience built up, reactors became insanely safe—in fact, the safest form of energy on the planet.

The Nuclear Bomb for Nuclear Power

Then the real bomb dropped.

A partial meltdown of a reactor at the Three Mile Island (TMI) plant in 1979 threw the entire nuclear industry and its net zero potential into disarray.

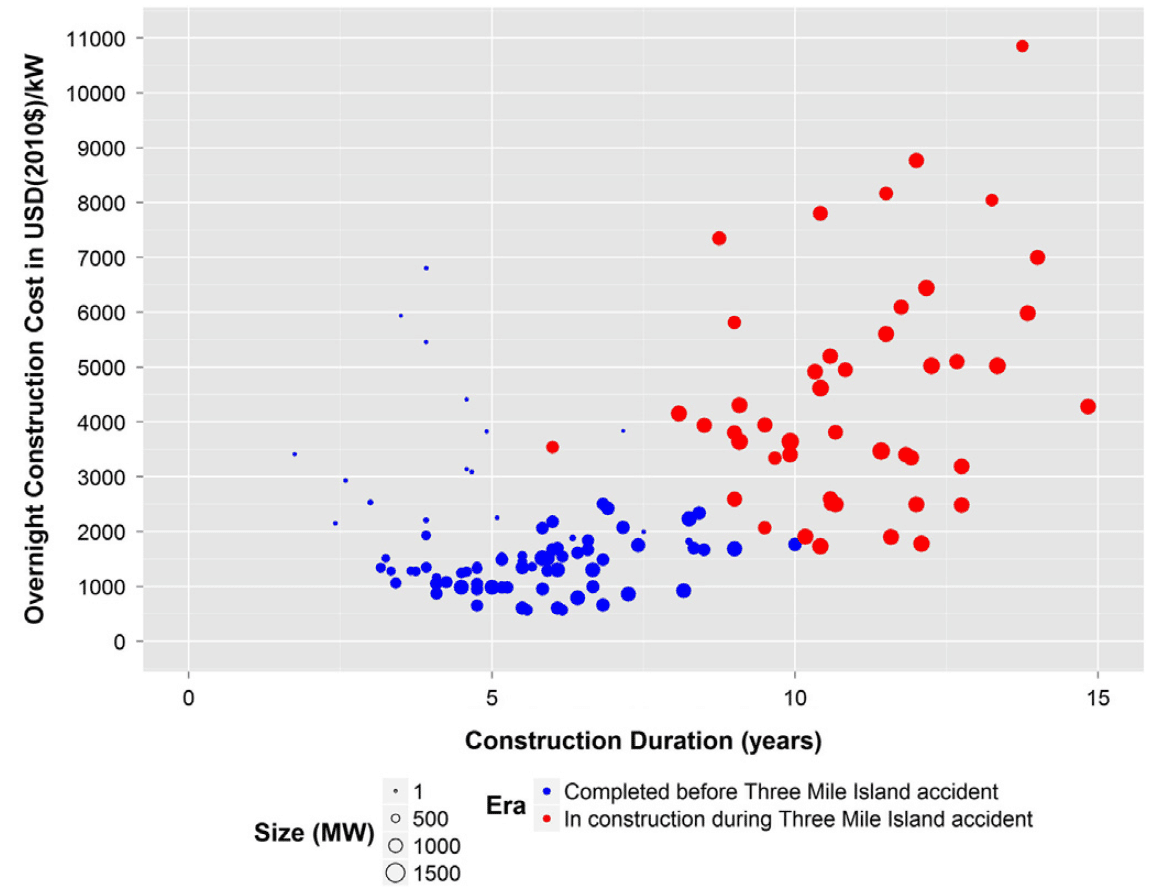

Overnight, the construction costs and timelines of nuclear plants spiraled out of control.

For the fifty-one reactors under construction when TMI occurred, regulatory delays and retrofit requirements were everywhere.

Median costs soared nearly 300 percent—more than $7,000/kW for some reactors.

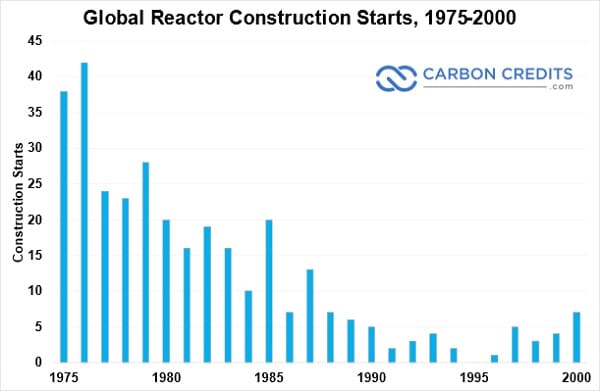

Applications for new nuclear reactors evaporated instantly.

And more than 120 reactor orders were cancelled—more than the entire current U.S. nuclear fleet.

With no new reactors being built, the learning rate was completely dead.

And the price of nuclear construction had no way of going down.

From the mid-‘80s until now, every nuclear project has been another ultra-high-cost FOAK.

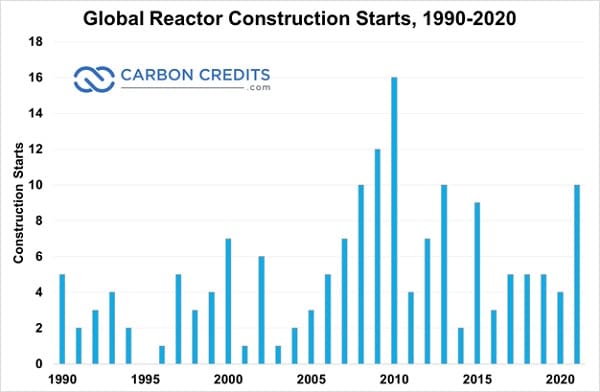

But here’s the good news: The United States is beginning to return to its pre-disruption deployment and learning rates.

- And the learning curve is quickly restarting in the United States.

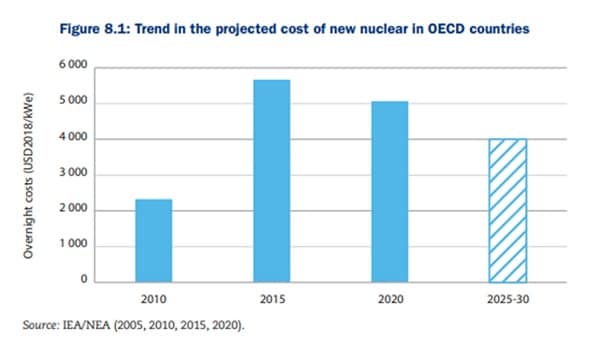

As new constructions rise, the cost is already dropping and is set to drop more than 30 percent from 2015–2030.

If the U.S. invests about $1 trillion into nuclear energy by 2050, it could supply more than 3,500 TWh of energy per year.

- Nuclear would provide 85 percent of current energy consumption—all carbon-free—for less than half of the CARES Act stimulus.

And the cost of nuclear per MWh would drop by about 60 percent, which would be great for the clean energy and net zero transition.

Studies have found that there is nothing inherently expensive about nuclear.

And as the learning curve comes alive again, nuclear is set to get much, much cheaper.